Overview

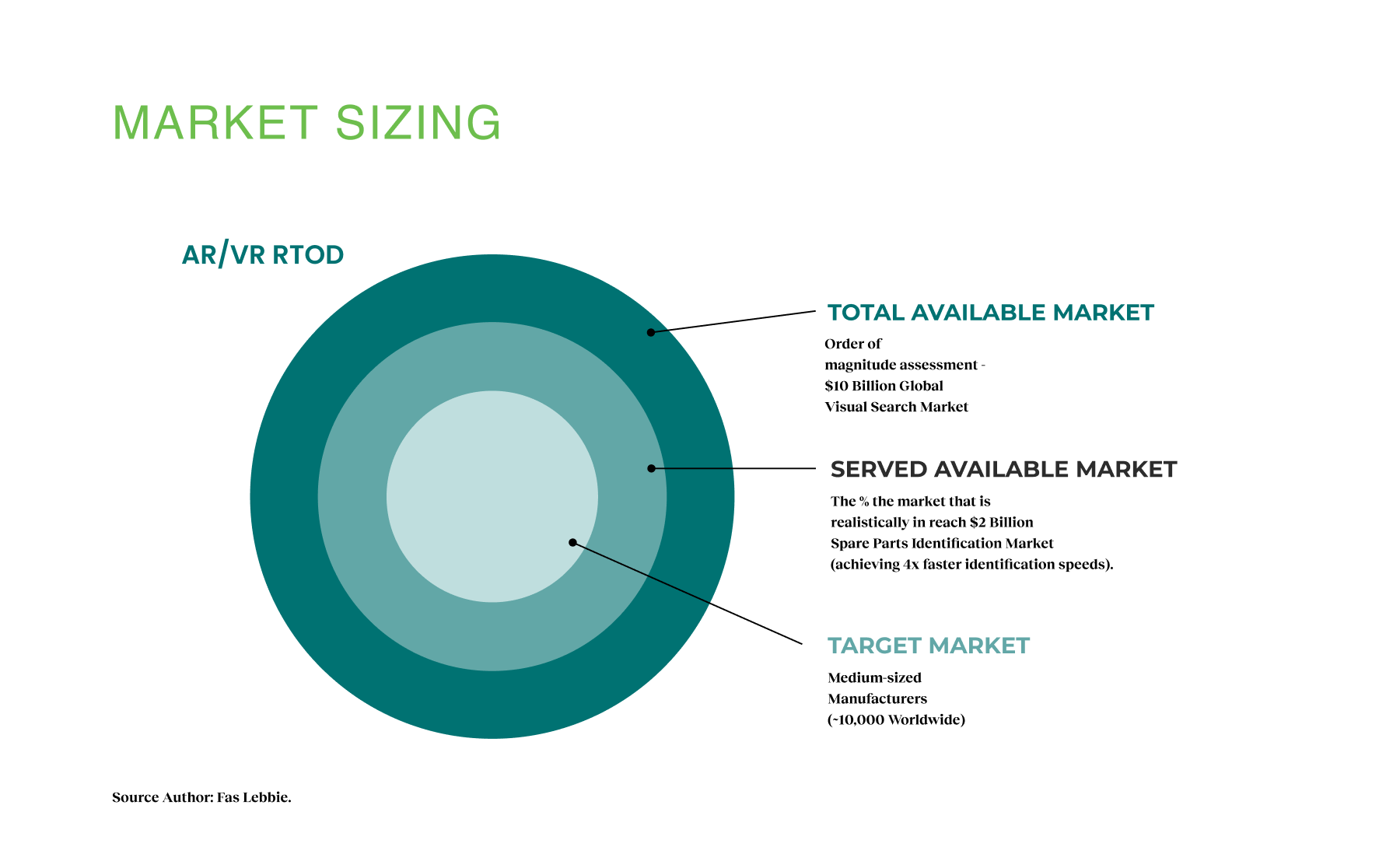

At PTC, I collaborated with the innovation team to launch a Real-Time Object Detection (RTOD) system that improved how service technicians identify and manage service spare parts. Using artificial intelligence and augmented reality, workers can now instantly recognize equipment components by simply taking a photo, eliminating hours of manual catalog searches. Integrated with Windchill PLM systems and deployed across mobile, tablet, HoloLens, and RealWear devices, this platform disrupted a $2 billion spare parts catalog industry by achieving 4× faster recognition with 90% accuracy across industrial environments.

Research & Design

Design-led research · Market analysis · Product Experience Design · XR interface design · AI model training · Cross-platform AR integration

- Duration: February 2021 – August 2022

- Partners: PTC, Vuforia, Azure, TensorFlow

- Team: Fas Lebbie, Dr. Eva Agapaki, PTC Innovation Runway Team

Confidentiality: This case study reflects my perspective while keeping elements of PTC’s work confidential. Specific details have been modified to showcase my design approach.

WHAT I BROUGHT

Conducted design-led research across four verticals to identify leverage points and validate a $2B disruption opportunity.

Designed intuitive XR interfaces across mobile, tablet, and AR platforms, and delivered AR prototypes integrated with PTC’s Windchill PLM, enabling scale across 30,000 enterprise customers in industrial environments.

Directed PTC’s Innovation Runway initiative, managing a team of 7-10 FTEs with a $1.7M budget to deliver breakthrough AR solutions adopted by Porsche, Liebherr, and other major manufacturers.

My Approach

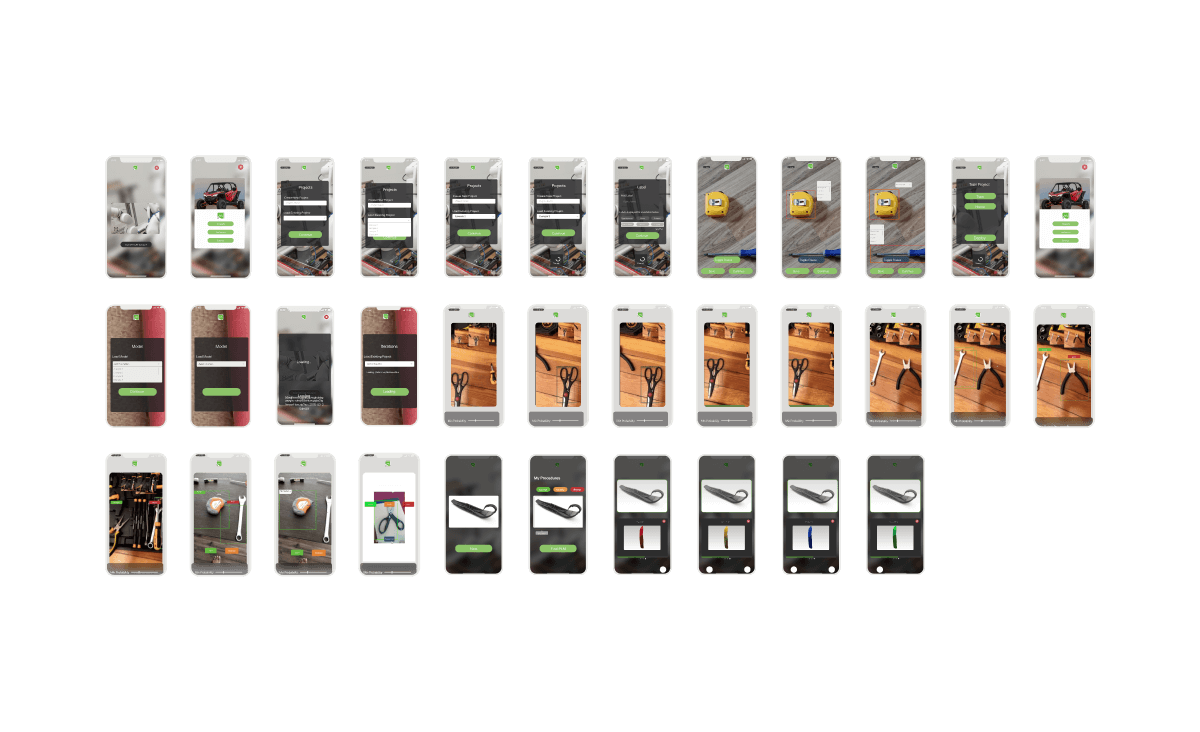

We began by mapping the parts management ecosystem through research into manual processes, competitors, and verticals. This research-first methodology shaped our design direction and ensured feasibility by aligning with PTC’s CAD infrastructure and training content, narrowing potential parts to a workable set of 20–200. Building on a base of 30,000 enterprise customers, we leveraged existing 3D imaging workflows and designed AR features optimized for smartphones. From AI training models to intuitive cross-platform AR interfaces, each feature was built to simplify identification, reduce friction, and improve productivity across diverse industrial environments.

Design Process

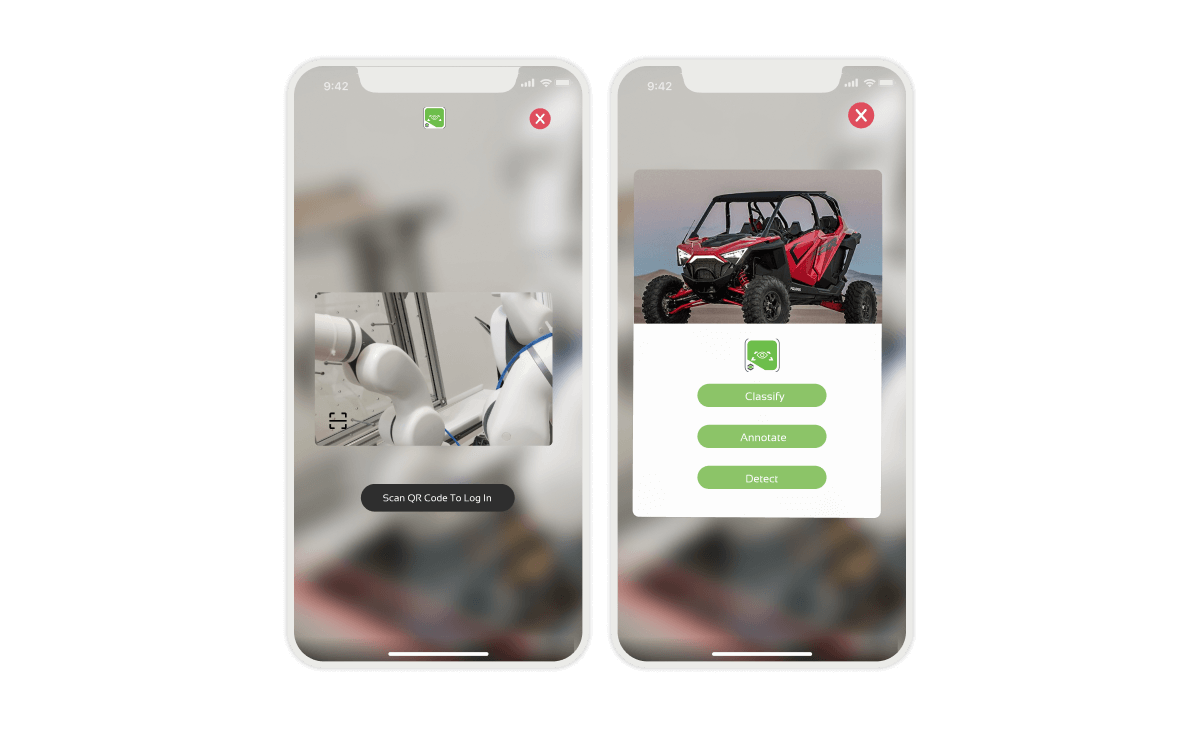

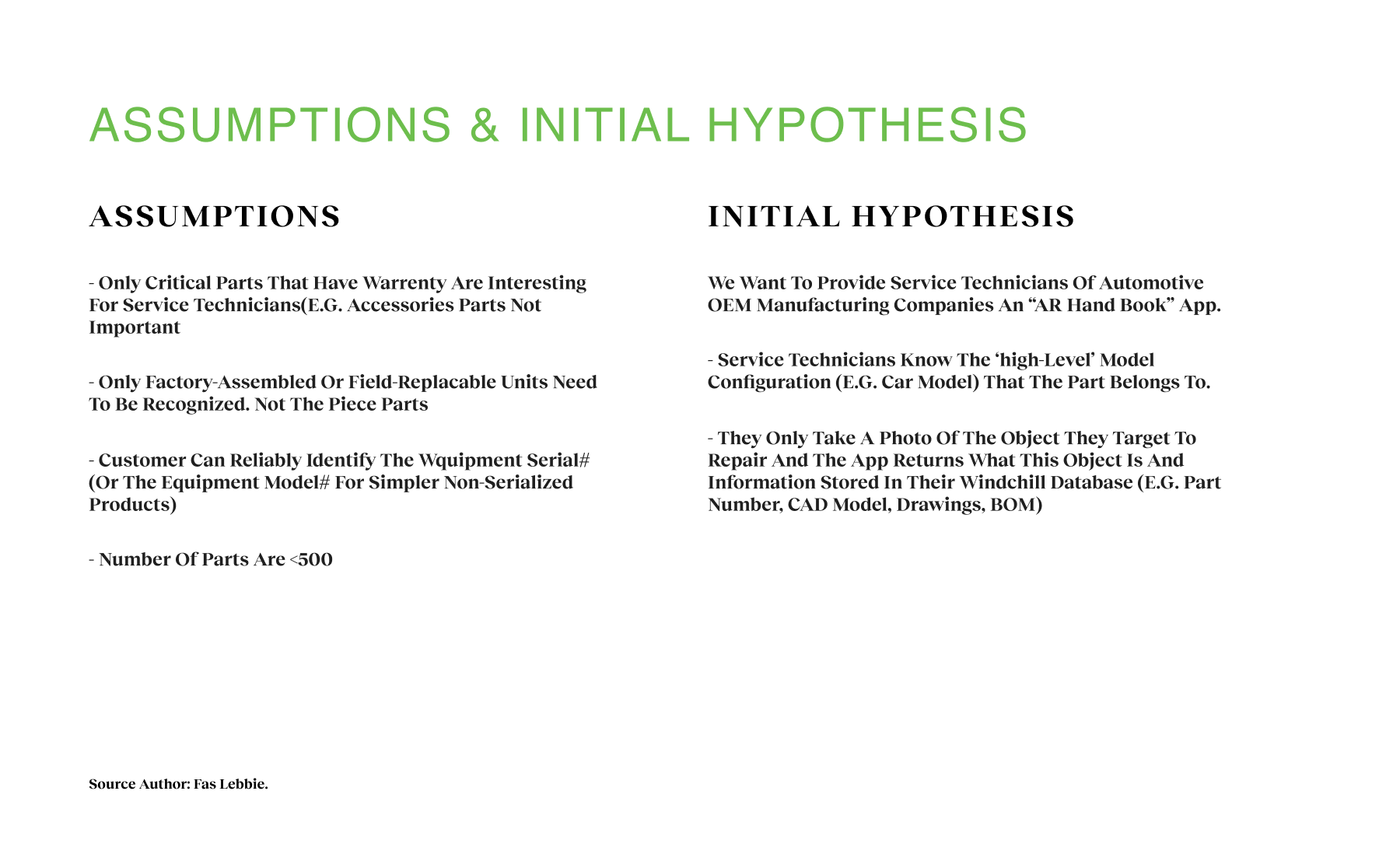

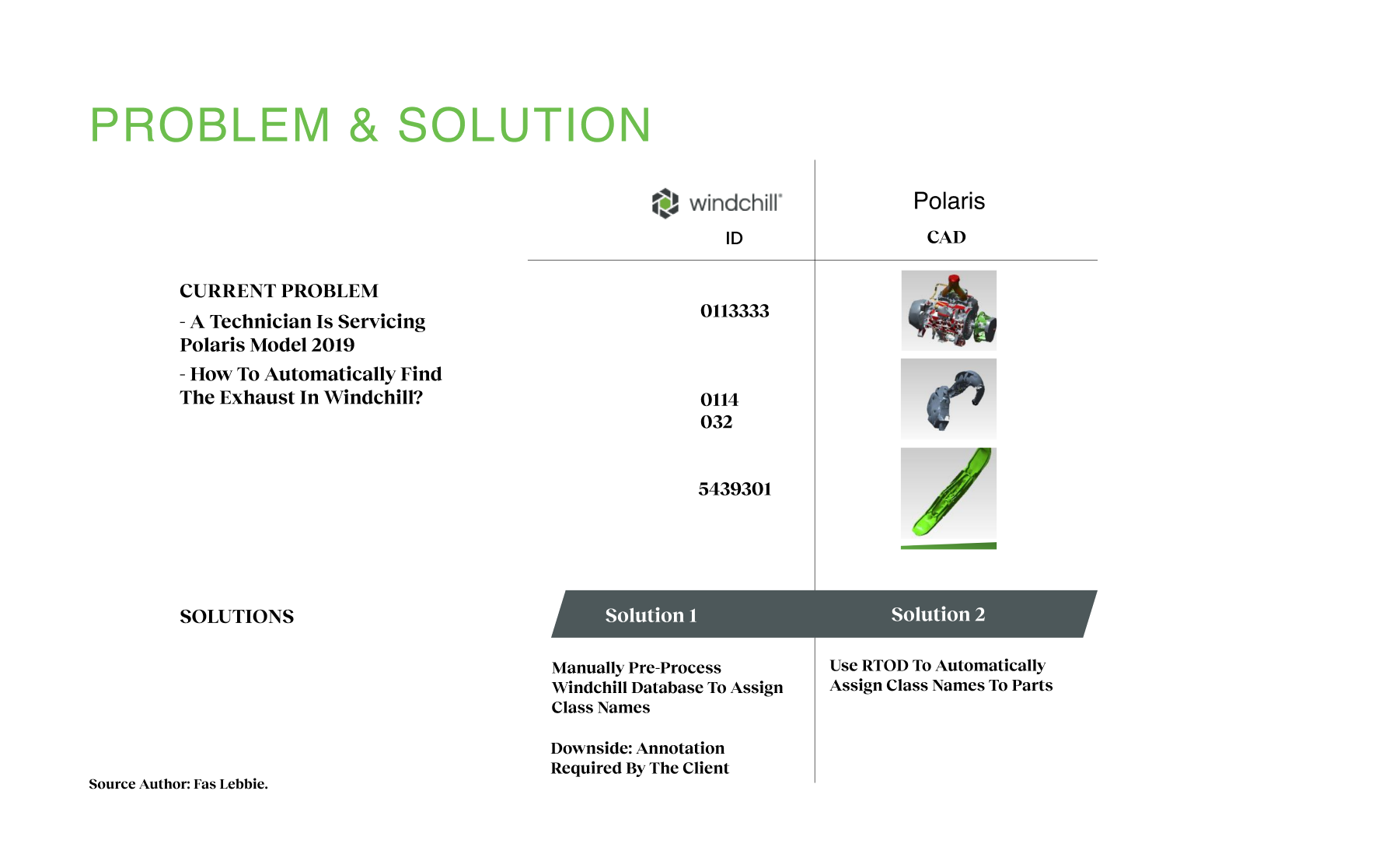

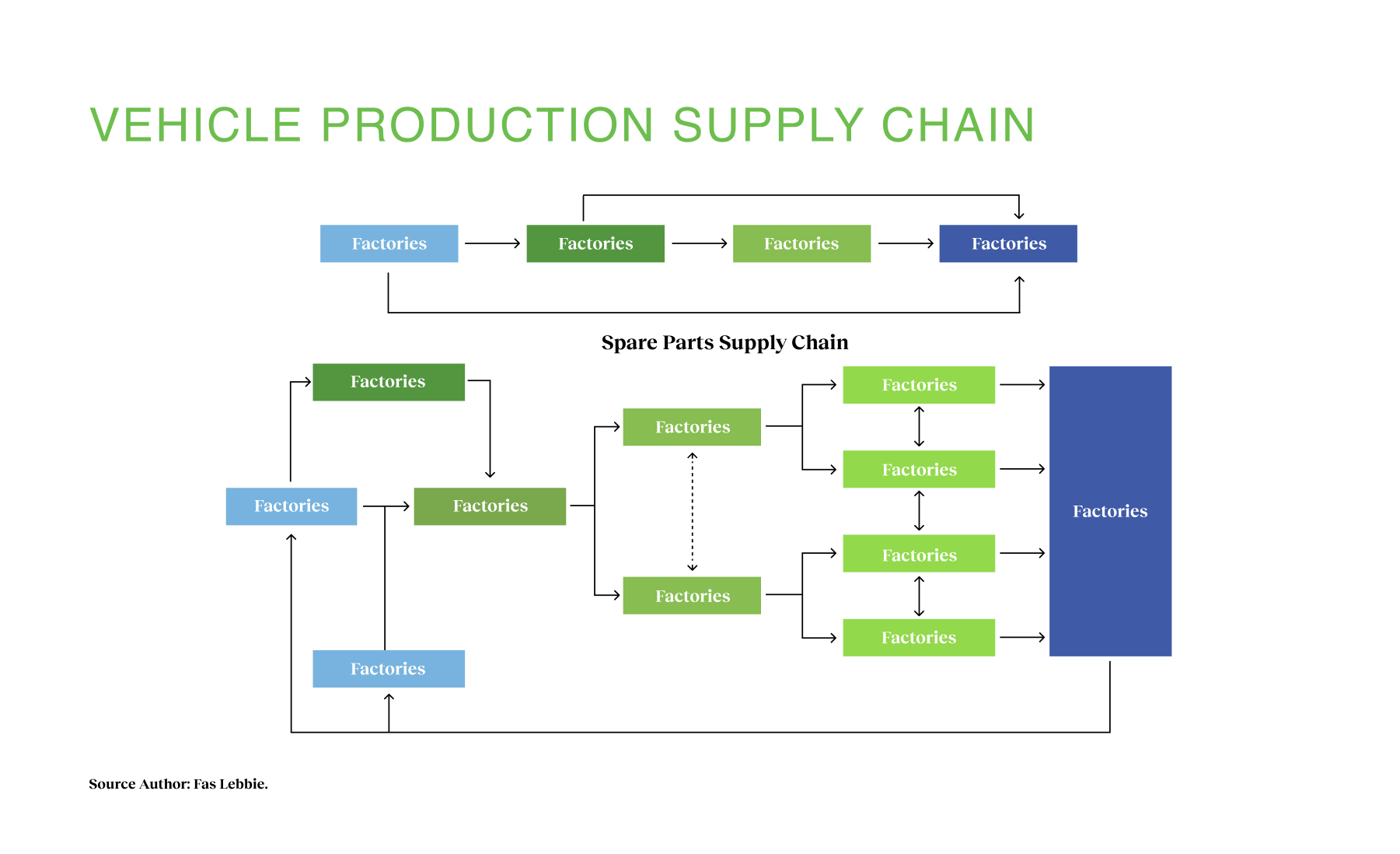

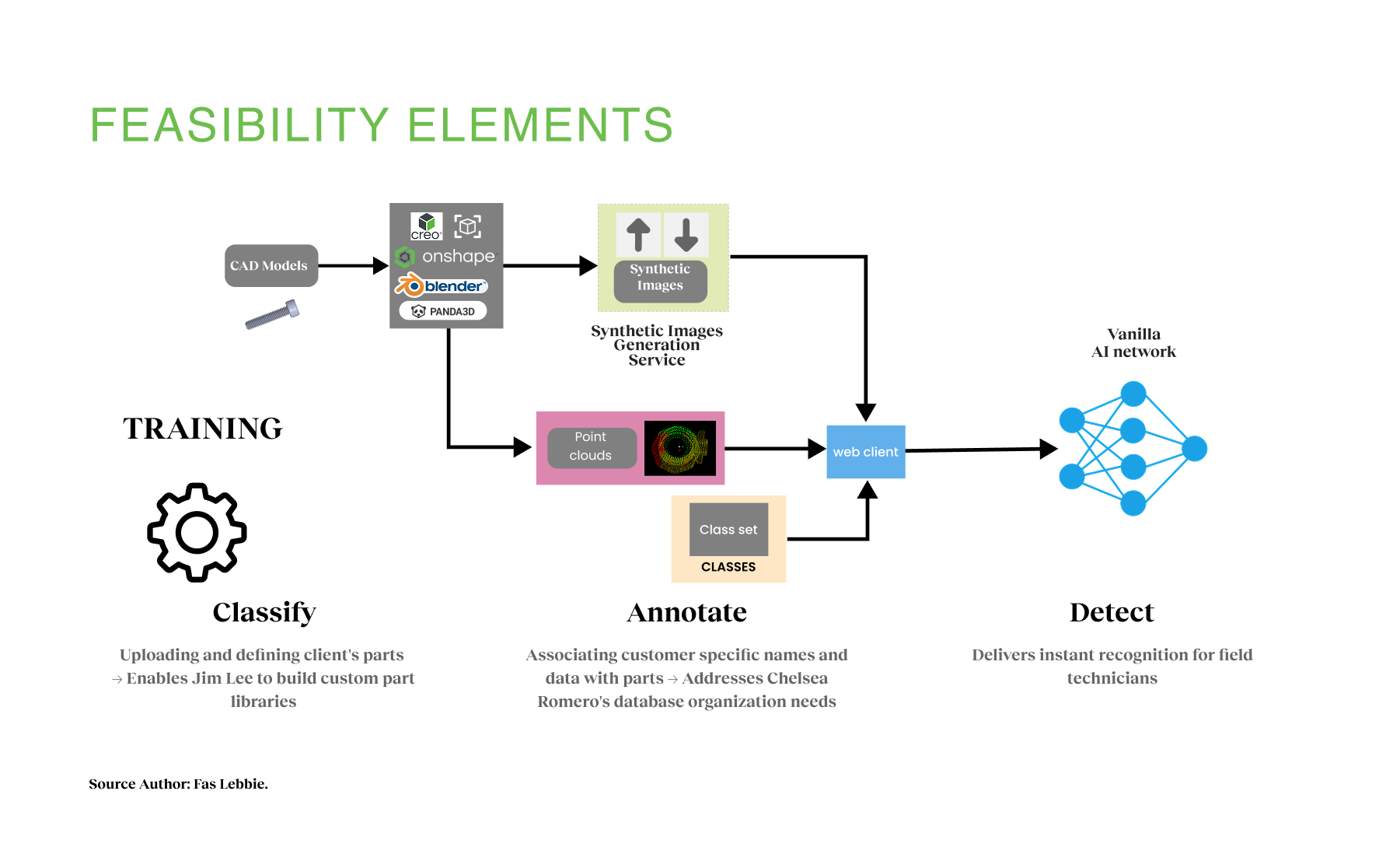

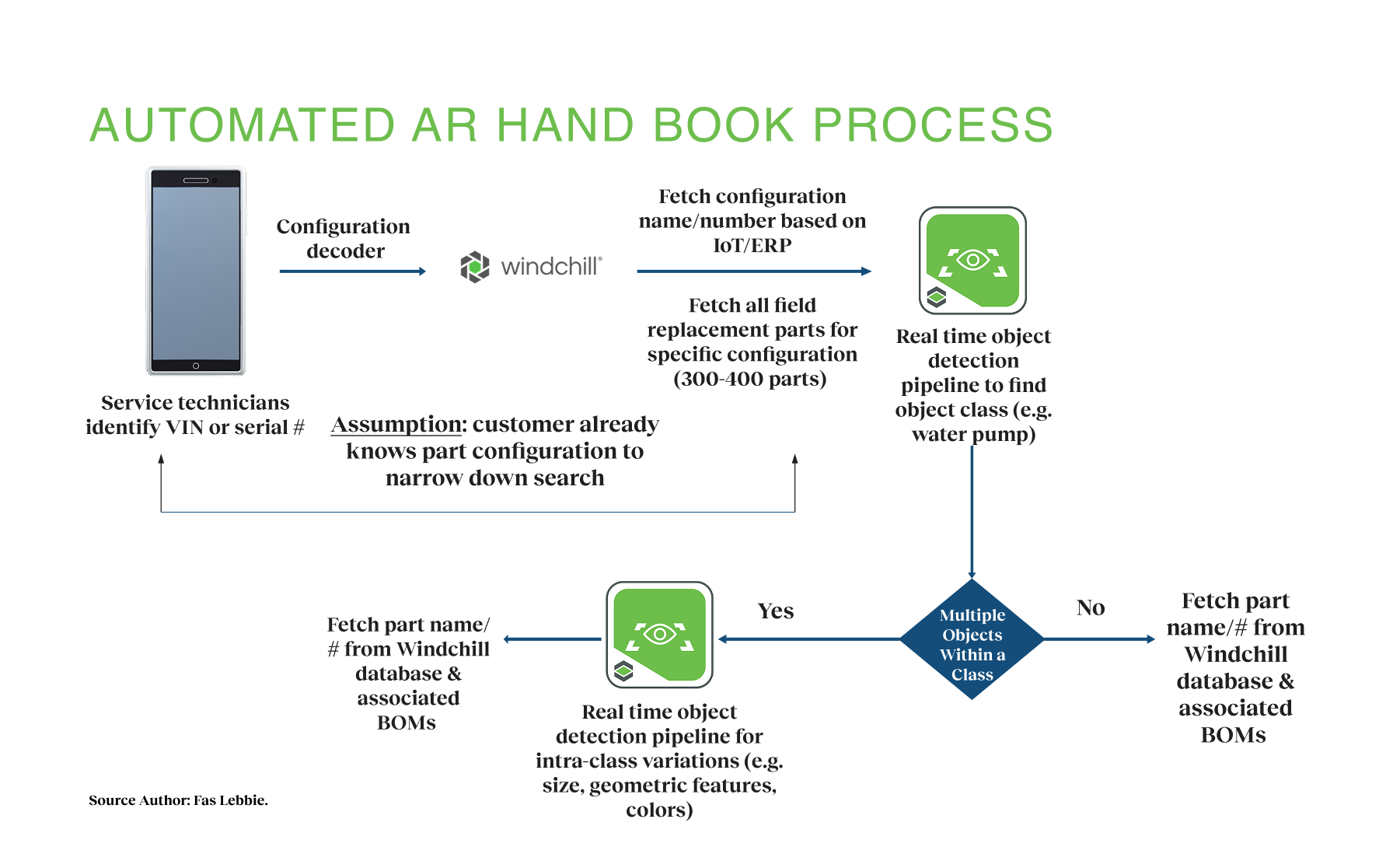

The research explored four key areas: current solutions, the competitive landscape, technological verticals, and hypothesis formulation.

We first documented the manual process used by service technicians, who rely on outdated part books to identify components for repair before purchasing them from online retailers. This process is time-consuming, risks ordering the wrong parts, and requires manuals in the worker’s primary language.

We then examined the global visual search market (valued at $10 billion in 2018 and projected to reach $28-60 billion by 2027) and identified $2 billion tied specifically to the needs of service technicians and factory workers. Retail players such as ASOS, Ted Baker, and eBay were already using comparable technology: ASOS’s Style Match allows purchases based on outfit images, Ted Baker launched shoppable videos, and eBay implemented visual search.

Our analysis of technological verticals showed that the automotive industry had the greatest potential because of the need to recognize individual parts within complex machines. We identified three primary end-user groups: factory operators, service technicians, and customers performing their own maintenance. Medium-sized manufacturers emerged as our target market because of their number (approximately 10,000 worldwide), high revenues ($400M-$10B), and desire for efficiency improvements.

These pain points and market opportunities gave us a baseline for a solution that digitizes and streamlines part identification through visual recognition. The research also guided our technical approach, a three-stage process: classify (uploading and defining the client’s parts), annotate (associating customer-specific names and data with parts), and detect (reliably identifying parts through AI).
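To make the classify, annotate, and detect stages concrete, here is a minimal sketch in Python. It is an illustration only, not the production system: the `PartCatalog` class, its method names, the language-keyed names, and the confidence threshold are all assumptions, and the `scores` dictionary stands in for whatever a trained recognition model would return.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    class_id: str                                # identifier assigned at the classify stage
    names: dict = field(default_factory=dict)    # customer-specific names keyed by language code
    data: dict = field(default_factory=dict)     # any other customer-specific data


class PartCatalog:
    """Hypothetical in-memory catalog covering the three stages."""

    def __init__(self):
        self._parts = {}

    def classify(self, class_id):
        """Stage 1: register one of the client's parts as a recognizable class."""
        self._parts.setdefault(class_id, Part(class_id))

    def annotate(self, class_id, language, name, **data):
        """Stage 2: attach a customer-specific name and data to a registered part."""
        part = self._parts[class_id]
        part.names[language] = name
        part.data.update(data)

    def detect(self, scores, language="en", threshold=0.5):
        """Stage 3: map model scores (class_id -> confidence) to an annotated part.

        Returns (display_name, data), or None when no registered part
        reaches the threshold.
        """
        known = {cid: s for cid, s in scores.items() if cid in self._parts}
        if not known:
            return None
        best = max(known, key=known.get)
        if known[best] < threshold:
            return None
        part = self._parts[best]
        return part.names.get(language, best), part.data


catalog = PartCatalog()
catalog.classify("pump-01")
catalog.annotate("pump-01", "en", "Hydraulic pump", sku="HP-1")
catalog.annotate("pump-01", "de", "Hydraulikpumpe")
result = catalog.detect({"pump-01": 0.92, "valve-07": 0.05})
```

Keeping annotation separate from classification means a customer can rename or localize a part without retraining the model, which is one plausible reason to split the stages this way.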

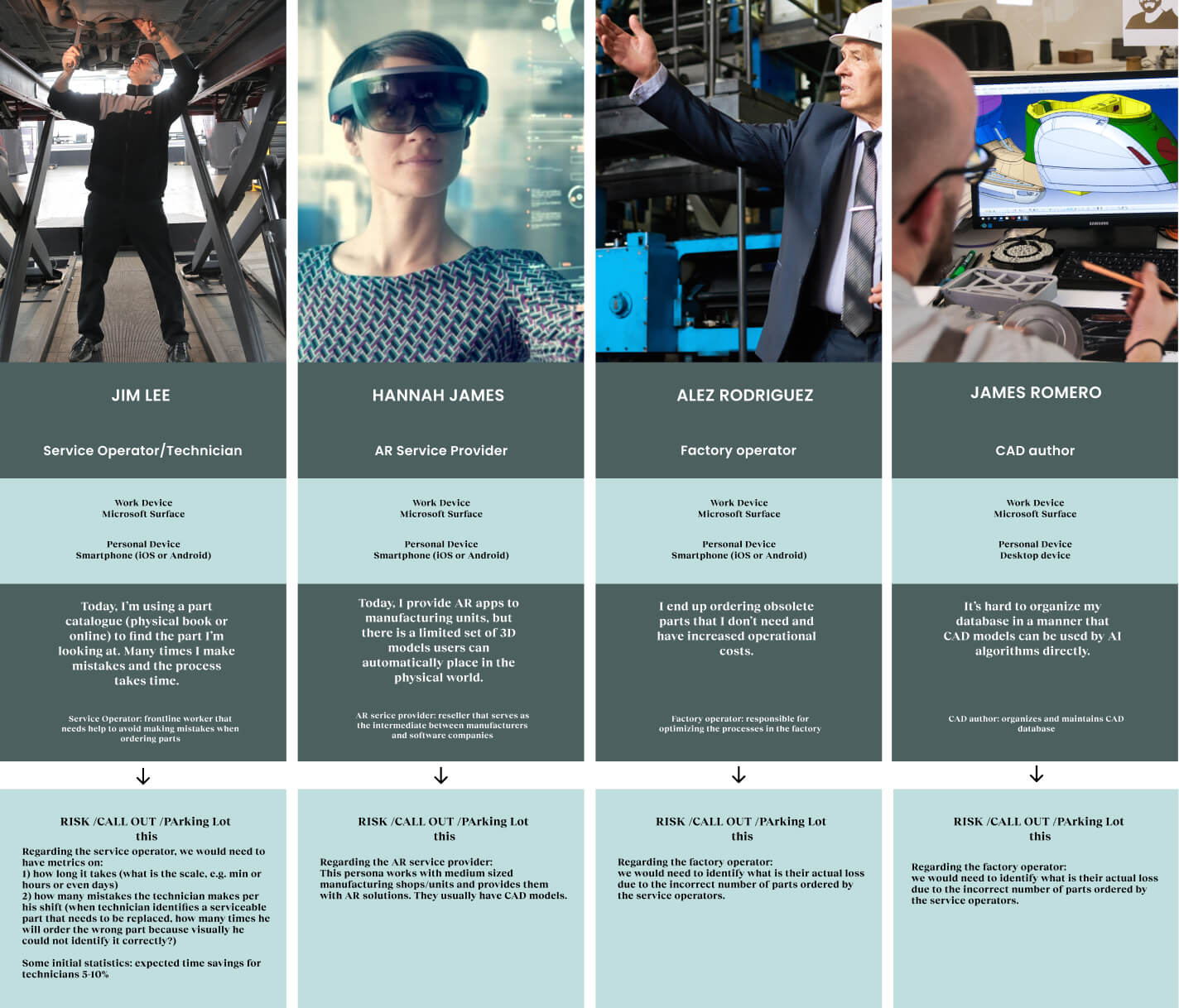

Our investigation showed that PTC/Vuforia had strong reasons to enter this industry. PTC could already provide end-to-end Create-Manage-Deliver service information on spare parts, thanks to its established CAD infrastructure, which spanned authoring, management, and delivery of all needed content types. PTC also possessed training content that could help the system establish a reasonable universe of objects and narrow potential parts to 20-200. Additionally, AR was feasible on the smartphones that service technicians already carry, and PTC had a base of 30,000 customers who regularly worked with CAD and 3D imaging, providing an immediately accessible market. Through our user research, we identified four personas with distinct jobs to be done. These insights directly shaped our product requirements and user experience design:

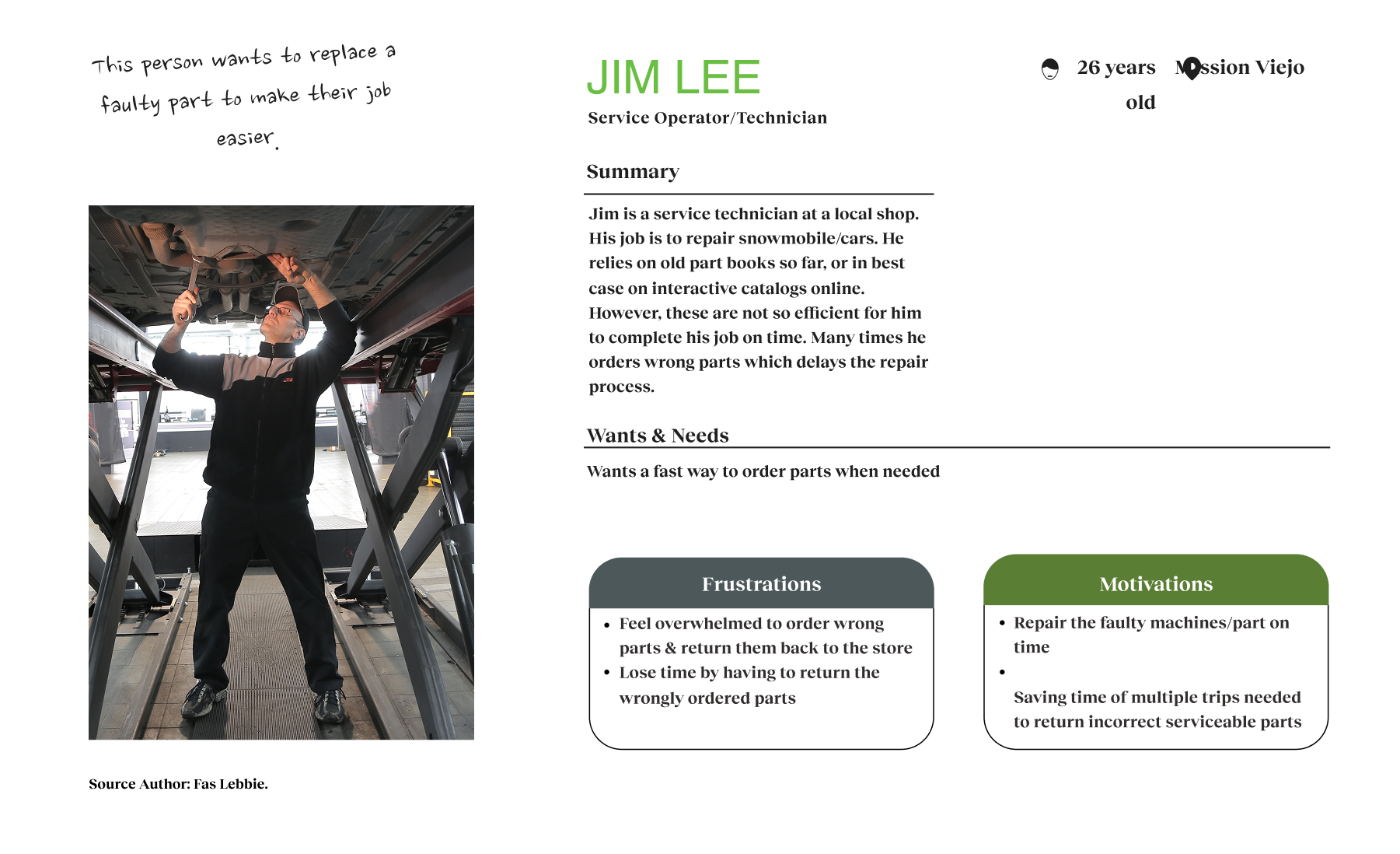

- Jim Lee (Service Operator/Technician)

  - “Today, I’m using a part catalog (physical book or online) to find the part I’m looking for. Many times, I make mistakes, and the process takes time.”

  - Needs help to avoid making mistakes when ordering parts.

- Hannah Jamser (AR Service Provider)

  - “Today, I provide AR apps to manufacturing units, but there is a limited set of 3D models users can automatically place in the physical world.”

  - Serves as the intermediary between manufacturers and software companies.

- Alez Rodriguez (Factory Operator)

  - “I end up ordering obsolete parts that I don’t need and have increased operational costs.”

  - Responsible for optimizing the processes in the factory.

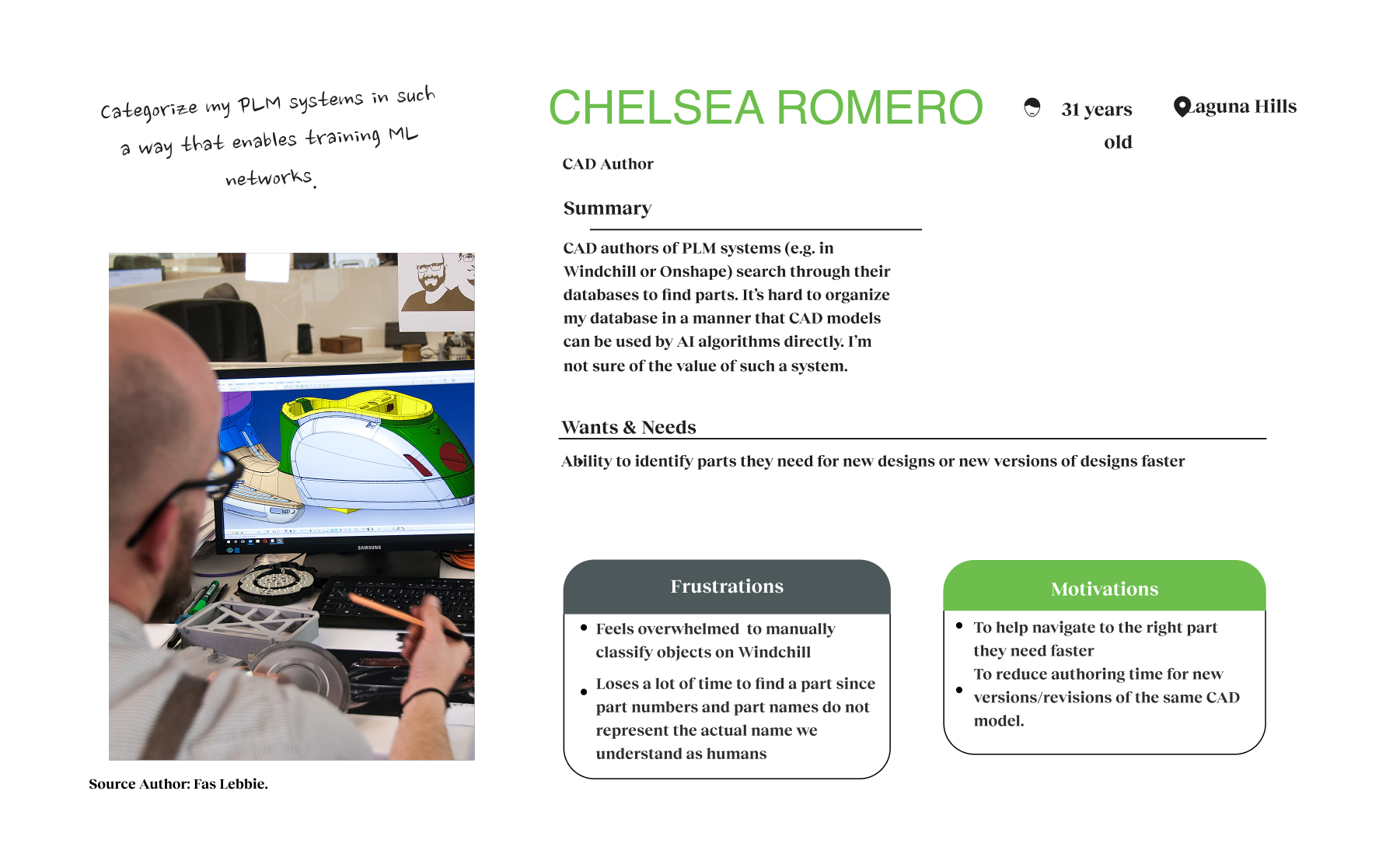

- James Romero (CAD Author)

  - “It’s hard to organize my database so that CAD models can be used by AI algorithms directly.”

  - Organizes and maintains the CAD database.

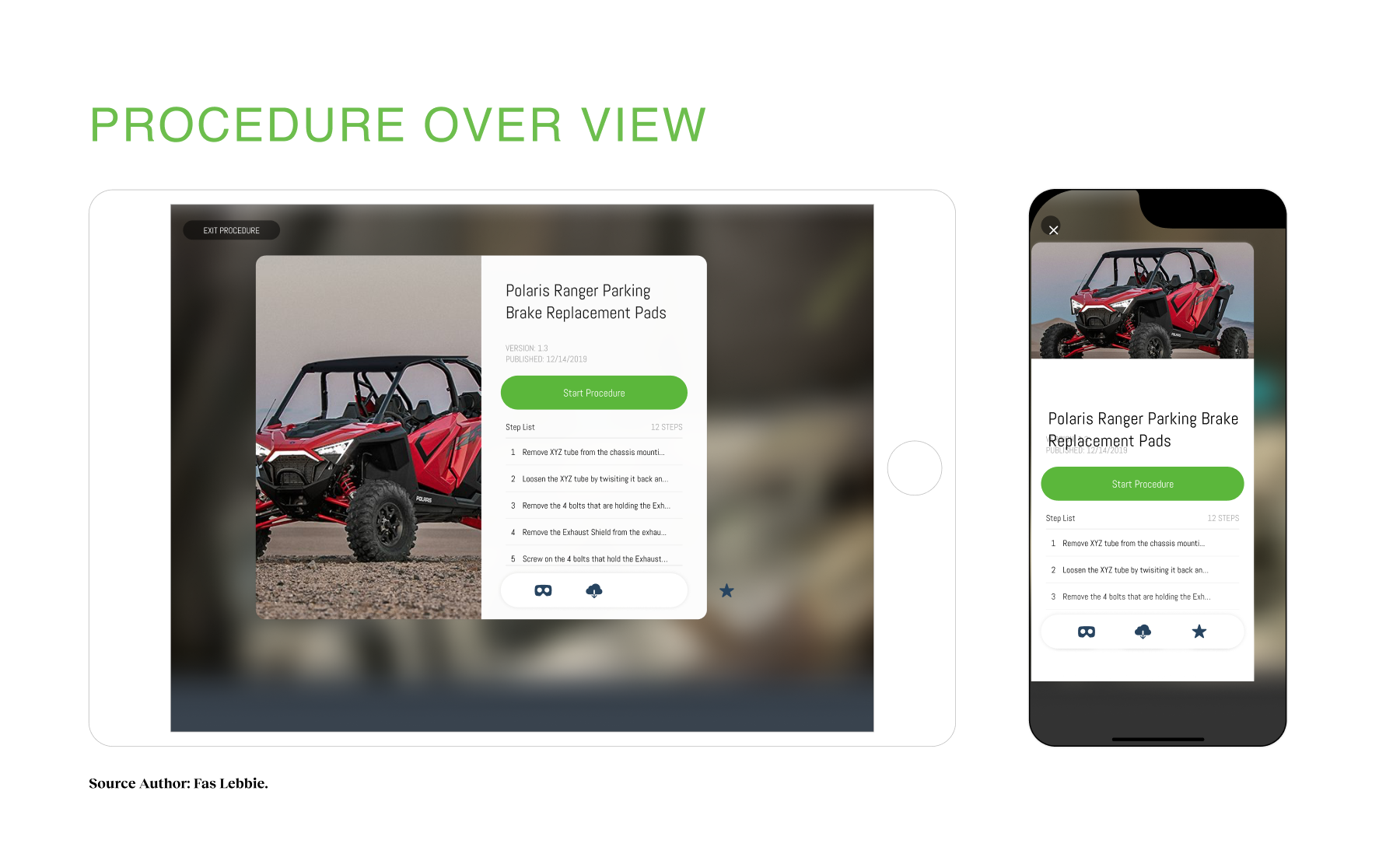

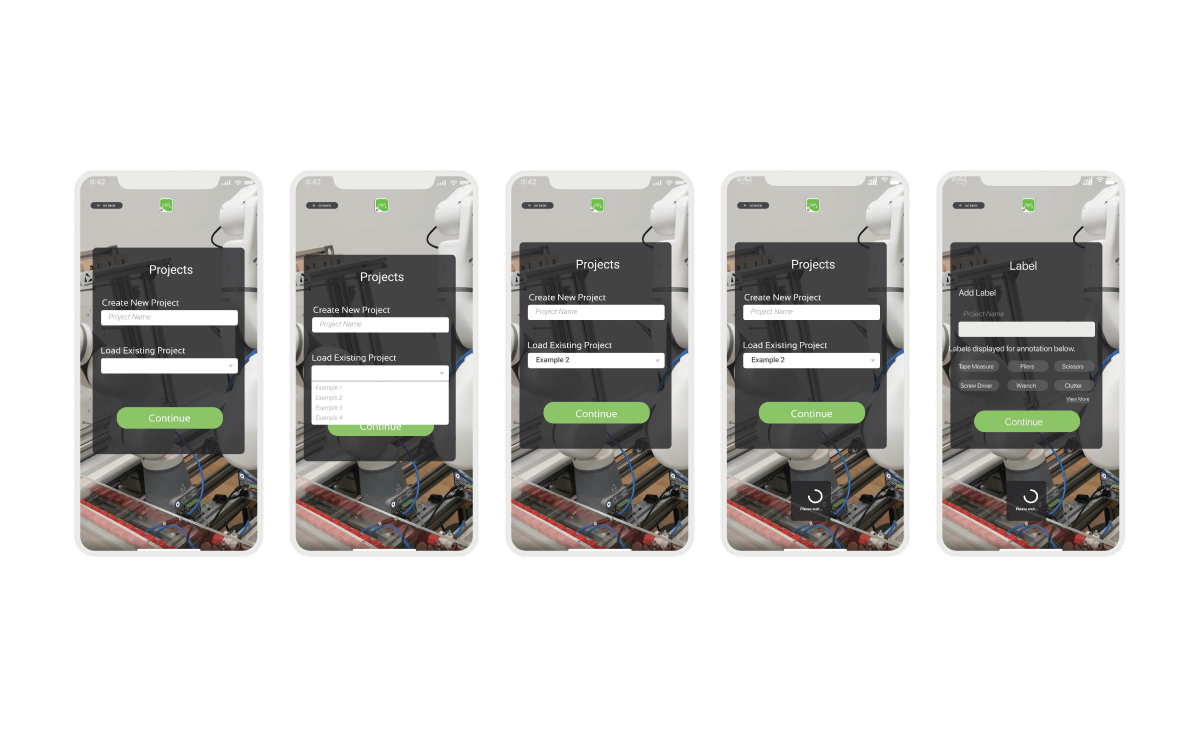

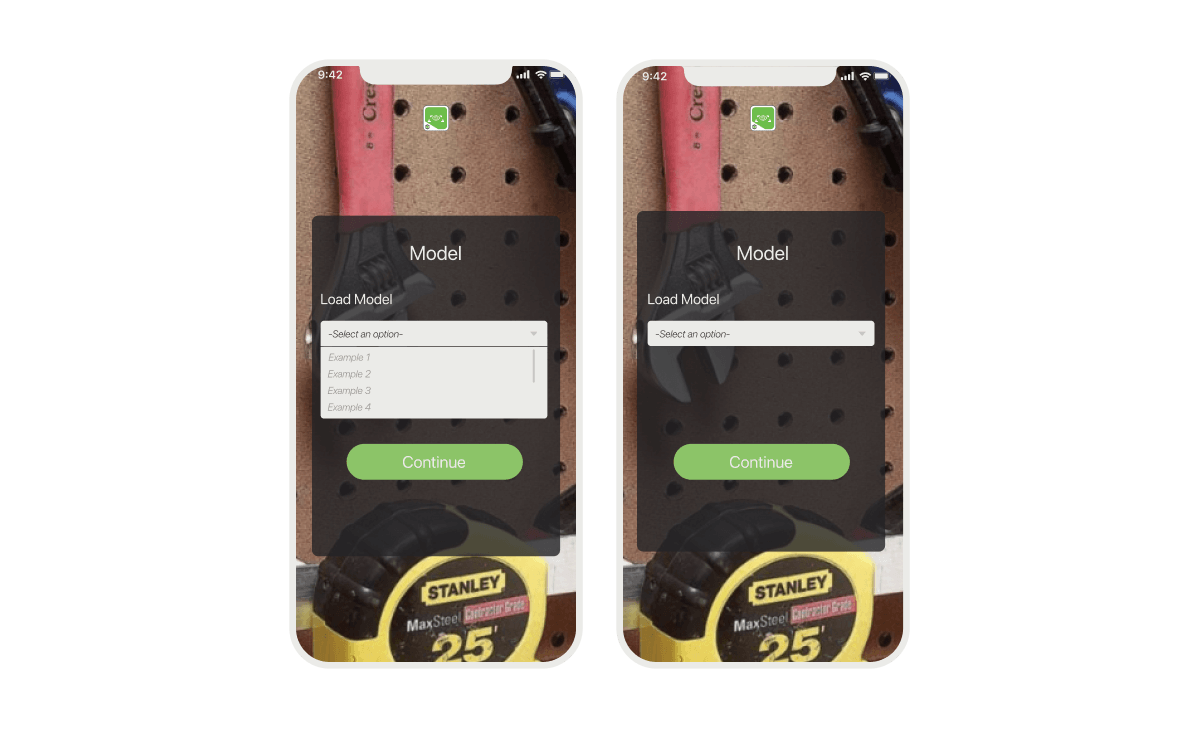

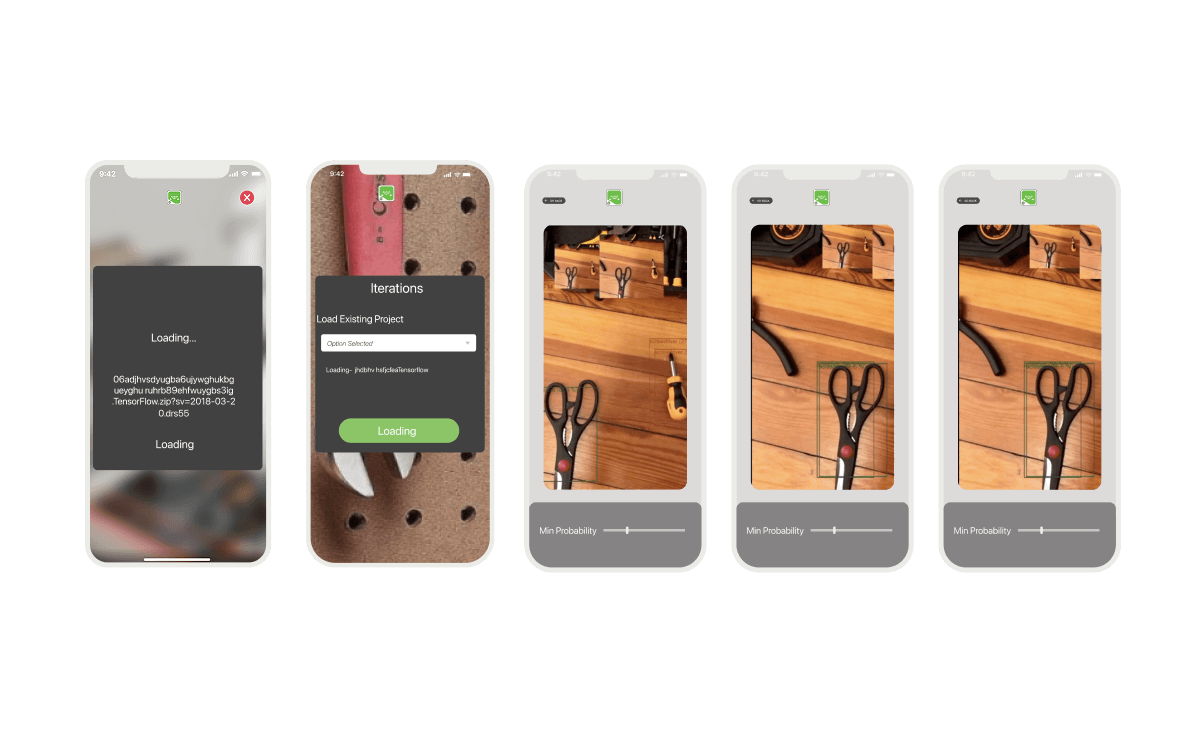

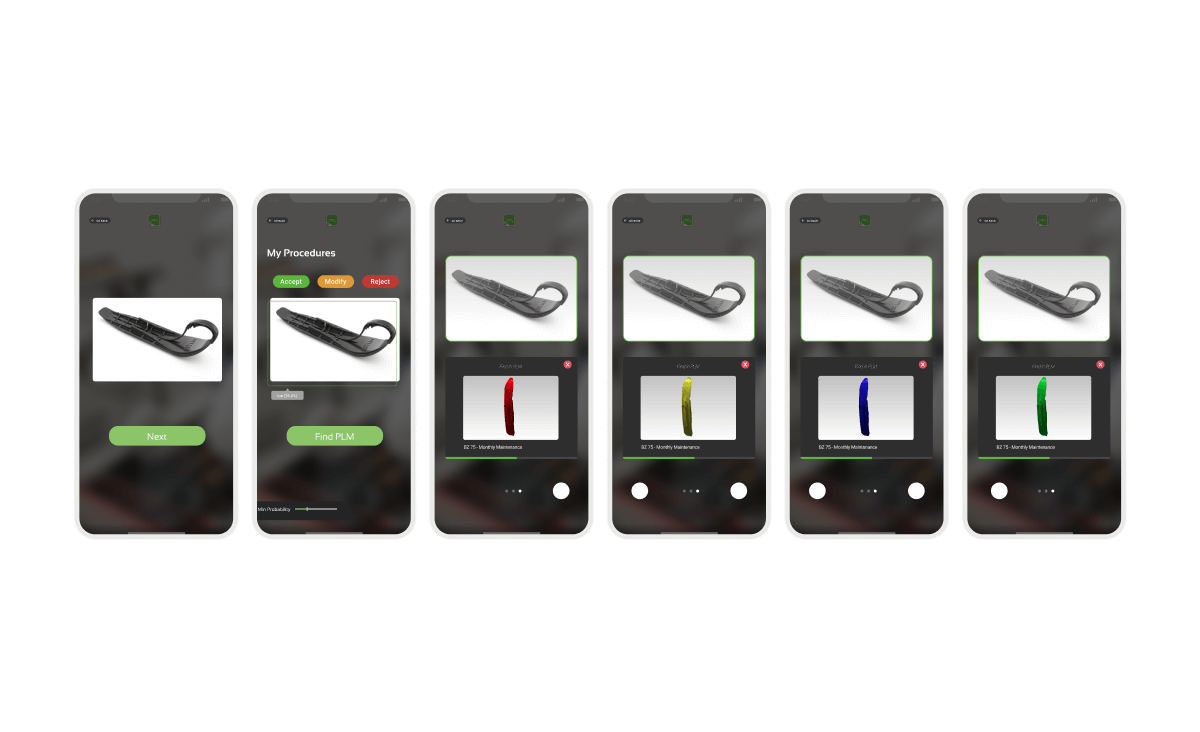

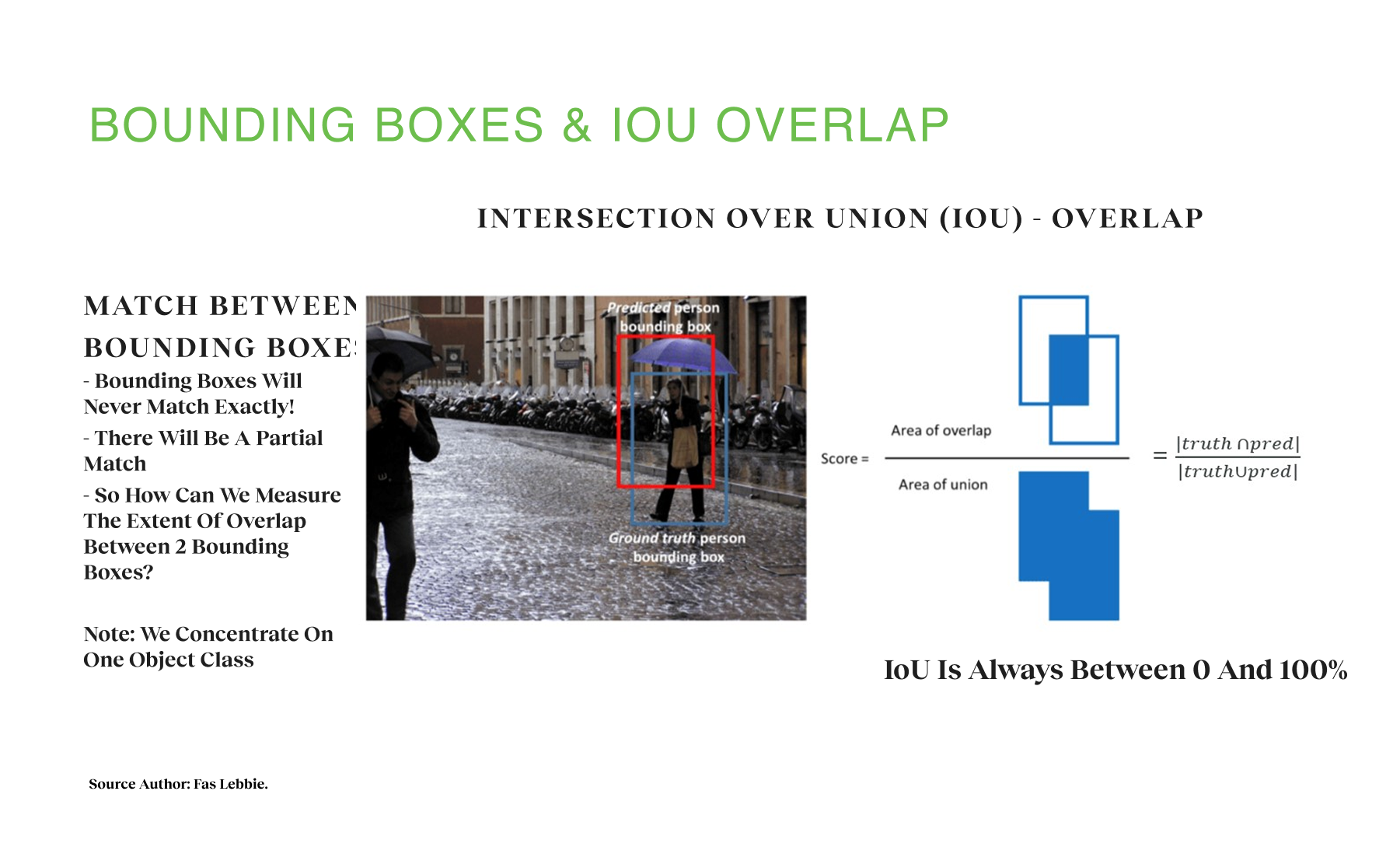

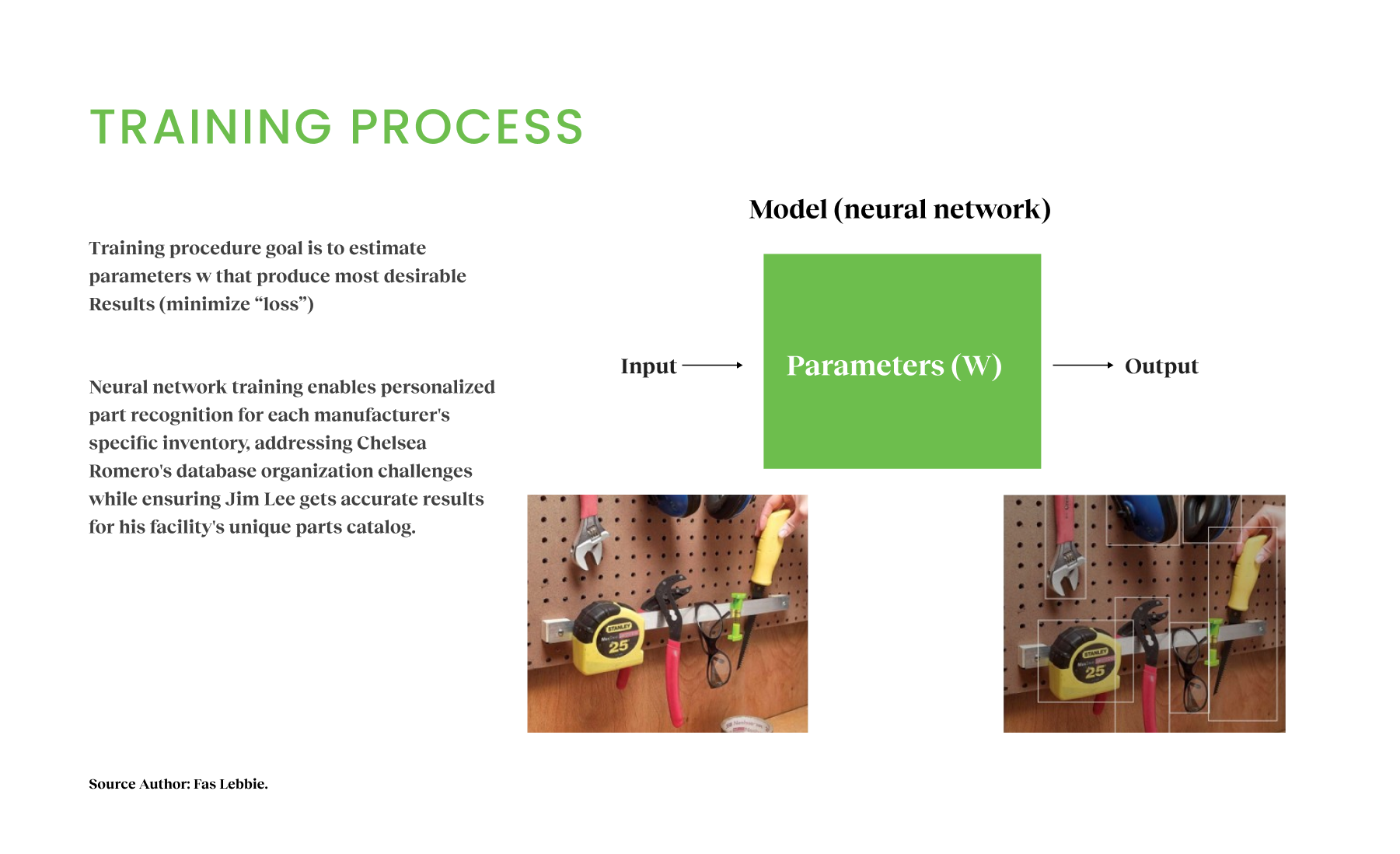

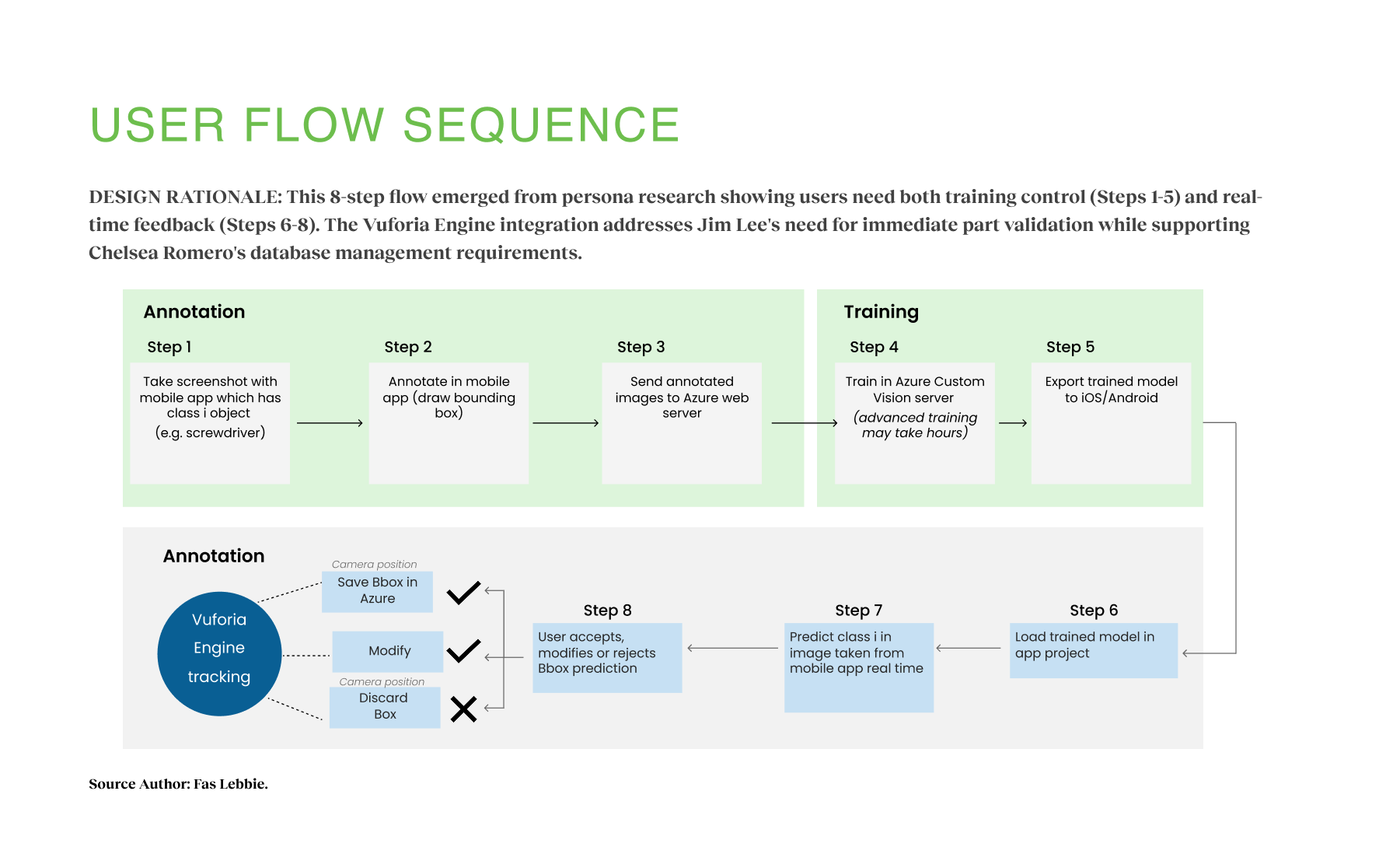

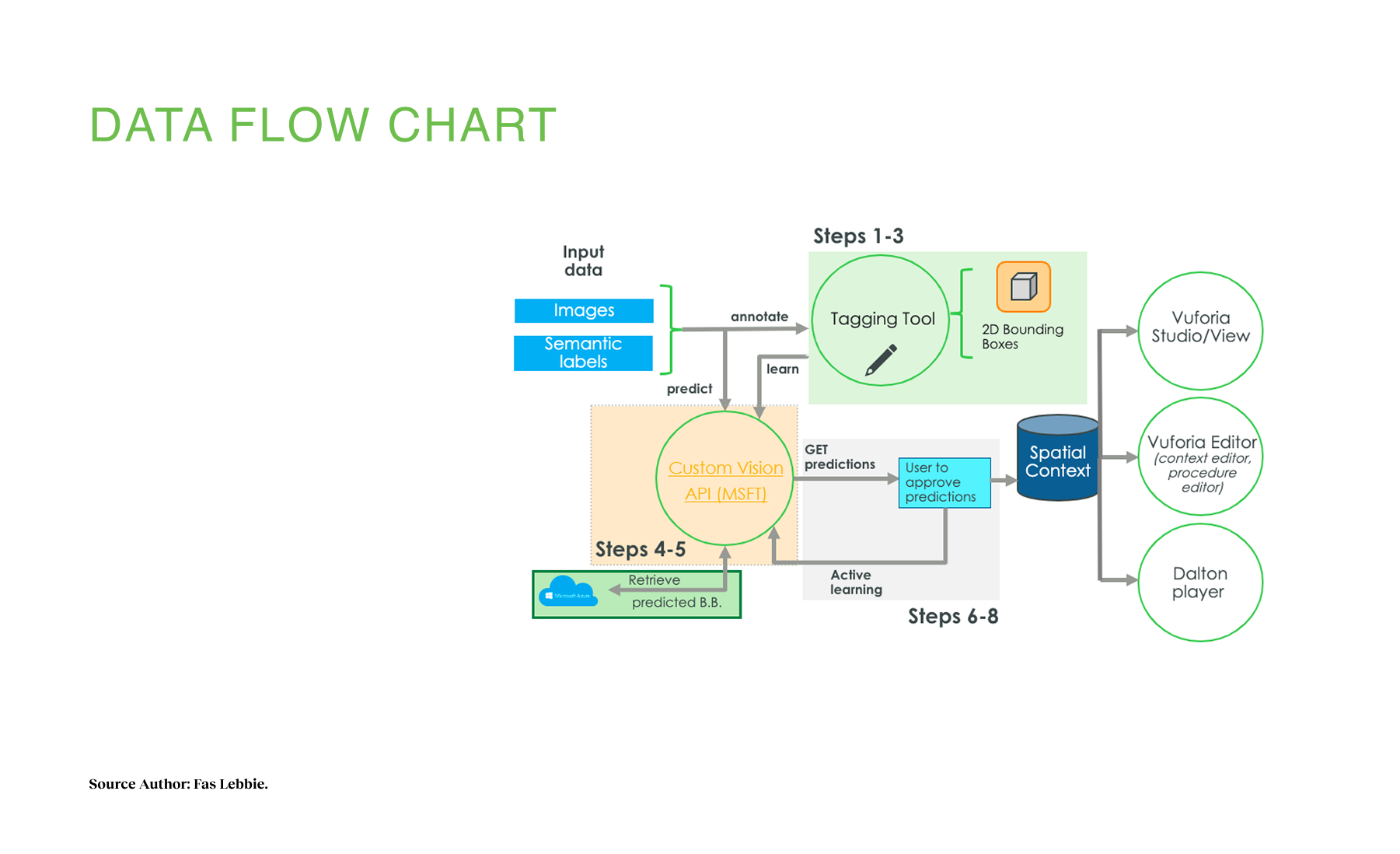

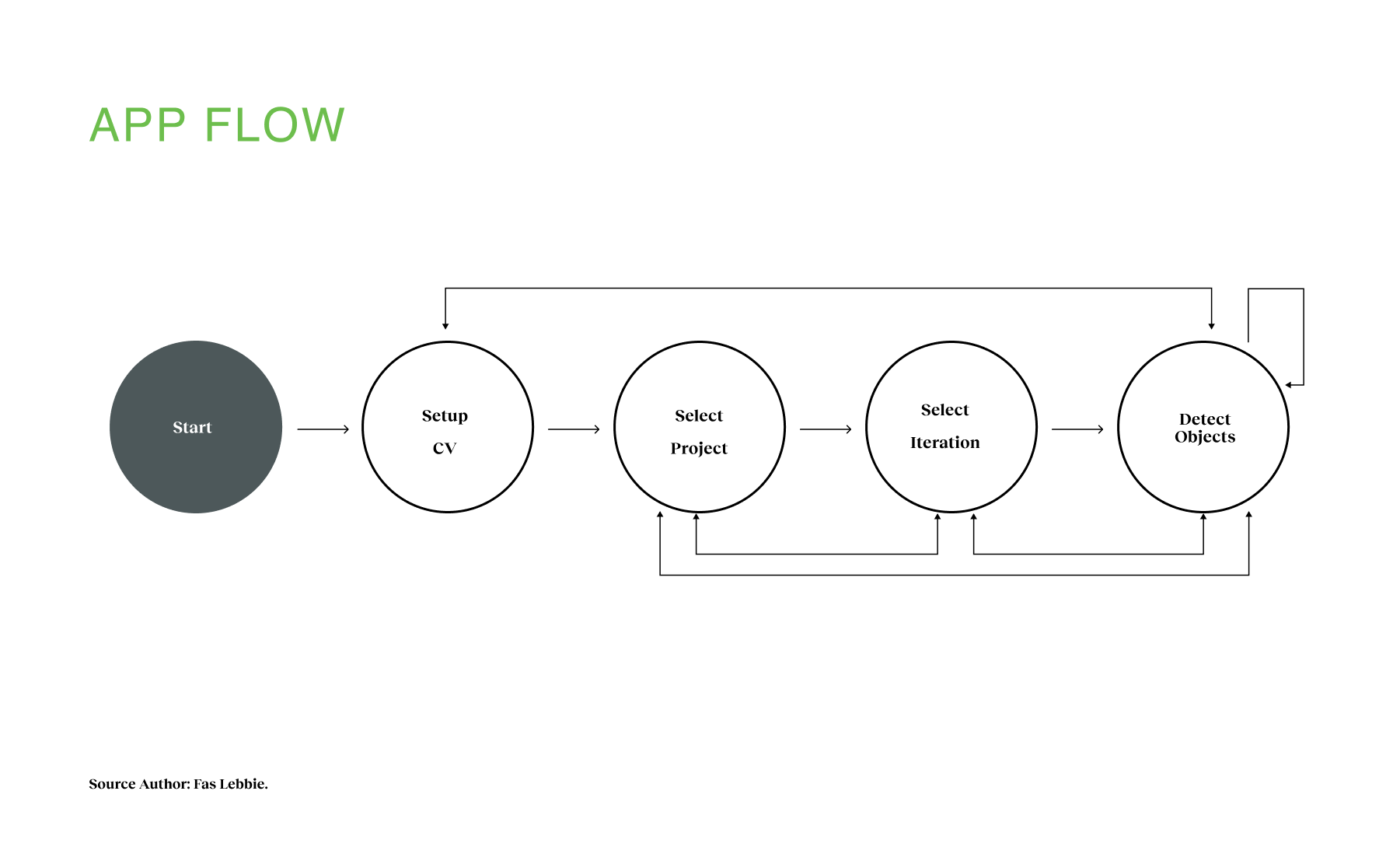

Our prototyping process developed a seamless user experience across multiple platforms while building a robust AI recognition system. We created a detailed user flow sequence that included both the annotation and training phases:

Annotation Flow (Steps 1-3):

1. Capture a screenshot in the mobile app that contains a class 1 object (e.g., a screwdriver).

2. Annotate the object in the mobile app by drawing a bounding box.

3. Send the annotated images to the Azure web server.

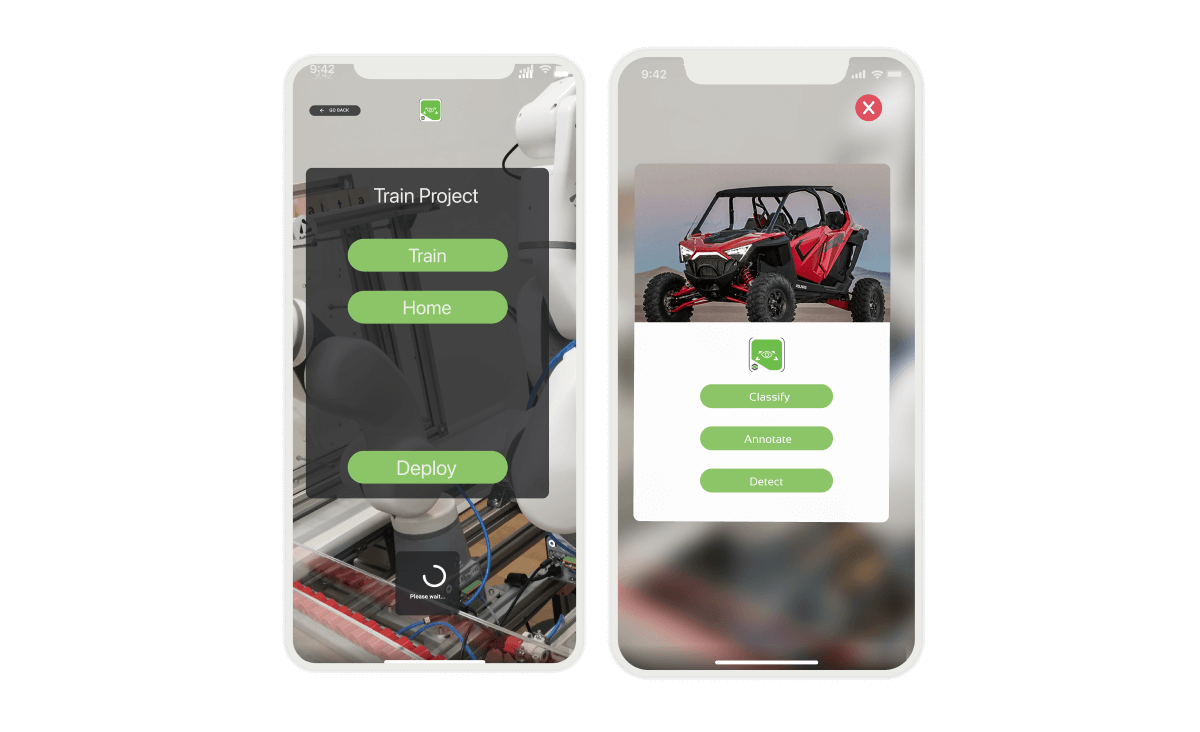

Training Flow (Steps 4-8):

4. Train in Azure Custom Vision server (advanced training may take hours).

5. Export the trained model to iOS/Android.

6. Load the trained model in app project.

7. Predict class in an image taken from the mobile app in real-time.

8. The user accepts, modifies, or rejects the bounding box prediction.
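The annotation step (step 2) and the review step (step 8) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the app's actual code: the `BoundingBox`, `Prediction`, and `Review` types and both functions are hypothetical names, and storing boxes in normalized coordinates (0.0 to 1.0) is an assumed convention that keeps annotations independent of device resolution.

```python
from dataclasses import dataclass
from enum import Enum


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned box in normalized image coordinates (0.0 to 1.0)."""
    left: float
    top: float
    width: float
    height: float


def normalize_box(x, y, w, h, image_width, image_height):
    """Convert a pixel-space box drawn in the app into normalized coordinates."""
    if image_width <= 0 or image_height <= 0:
        raise ValueError("image dimensions must be positive")
    return BoundingBox(x / image_width, y / image_height,
                       w / image_width, h / image_height)


class Review(Enum):
    ACCEPT = "accept"
    MODIFY = "modify"
    REJECT = "reject"


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float
    box: BoundingBox


def apply_review(prediction, review, corrected_box=None):
    """Turn a reviewed prediction into a (label, box) example, or None if rejected."""
    if review is Review.REJECT:
        return None
    if review is Review.MODIFY:
        if corrected_box is None:
            raise ValueError("a corrected box is required when modifying")
        return (prediction.label, corrected_box)
    return (prediction.label, prediction.box)


# A 200x100 px box drawn at (100, 50) on a 400x200 px screenshot.
box = normalize_box(100, 50, 200, 100, 400, 200)
pred = Prediction("screwdriver", 0.9, box)
accepted = apply_review(pred, Review.ACCEPT)
```

Accepted and modified predictions could be fed back as new training examples, which is one way to realize the continuous improvement the flow implies.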

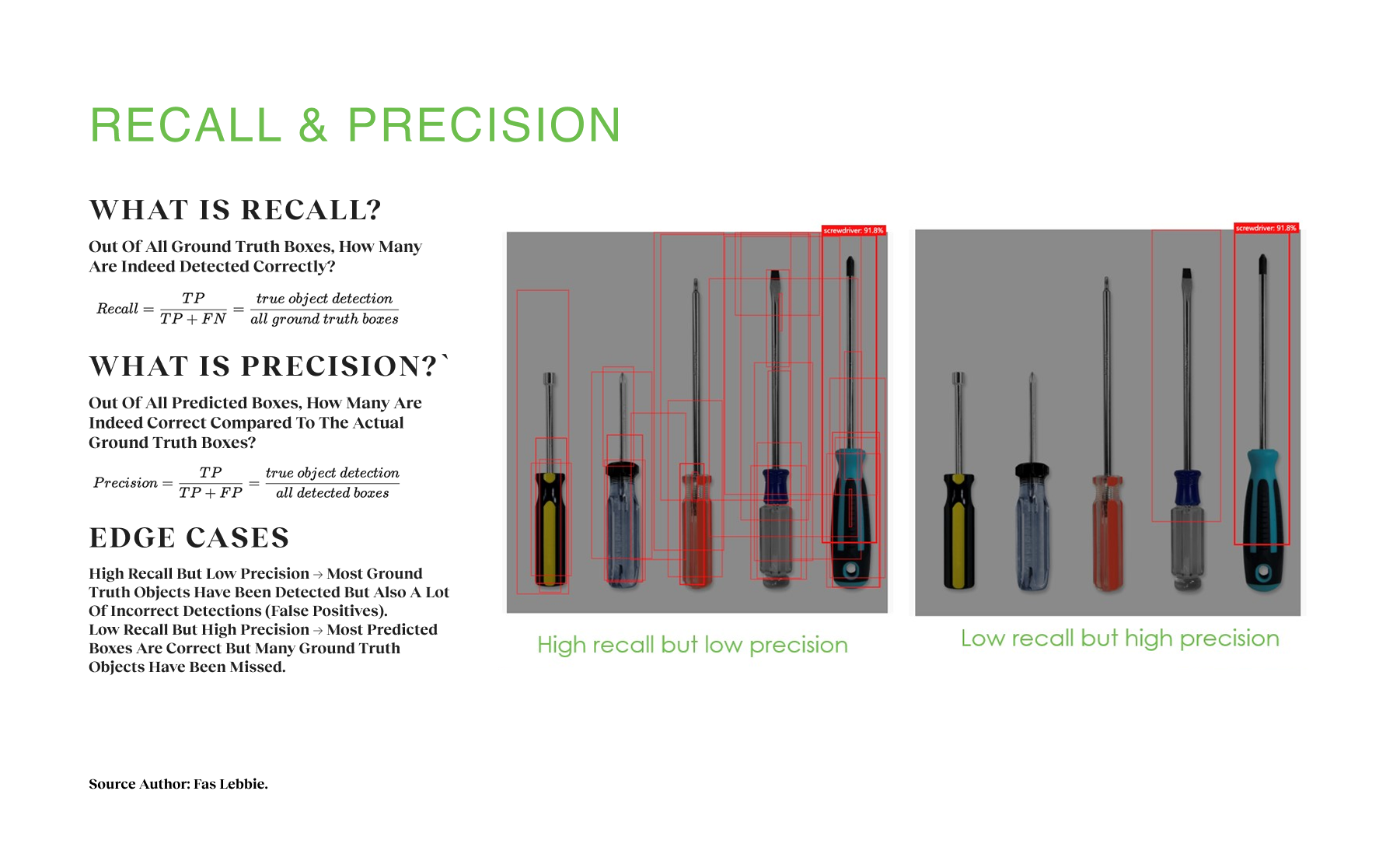

We developed an AI training model for the backend using tools like Onshape, Blender, and Parasolid to generate synthetic images and point clouds, which fed into a vanilla AI network for classification. Instead of relying on traditional annotation methods, we developed the RTO2-3D network, which was trained on both images and point cloud data.

For the front-end user experience, I designed high-fidelity interfaces for mobile devices, tablets, and RealWear AR headsets, adhering to platform-specific best practices. For mobile, we focused on 2D interactions, letting users act as a “magic hand” that makes inputs into the semi-real world. For tablets, we incorporated placeable pins on CAD models to help users make annotations, keeping clickable functions within reach. For RealWear AR, we limited background functions to preserve battery life.

Our implementation strategy was structured with clear execution parameters:

Time:

- 6 months for gradual adoption with Vuforia Studio widgets

- 1 year for RTOD services suite

- Across four sprint phases

Budget:

- Approximately $1.7M

Human Resources:

- 7-10 FTE

Working within these parameters, we successfully delivered a product with four unique characteristics:

- Efficient: Instantaneous predictions with constant improvement.

- Versatile: Adaptable to customer environments.

- Persistent: Constant predictions around the 3D space.

- Cross-platform: Integration between Unity Engine and Vuforia Studio.

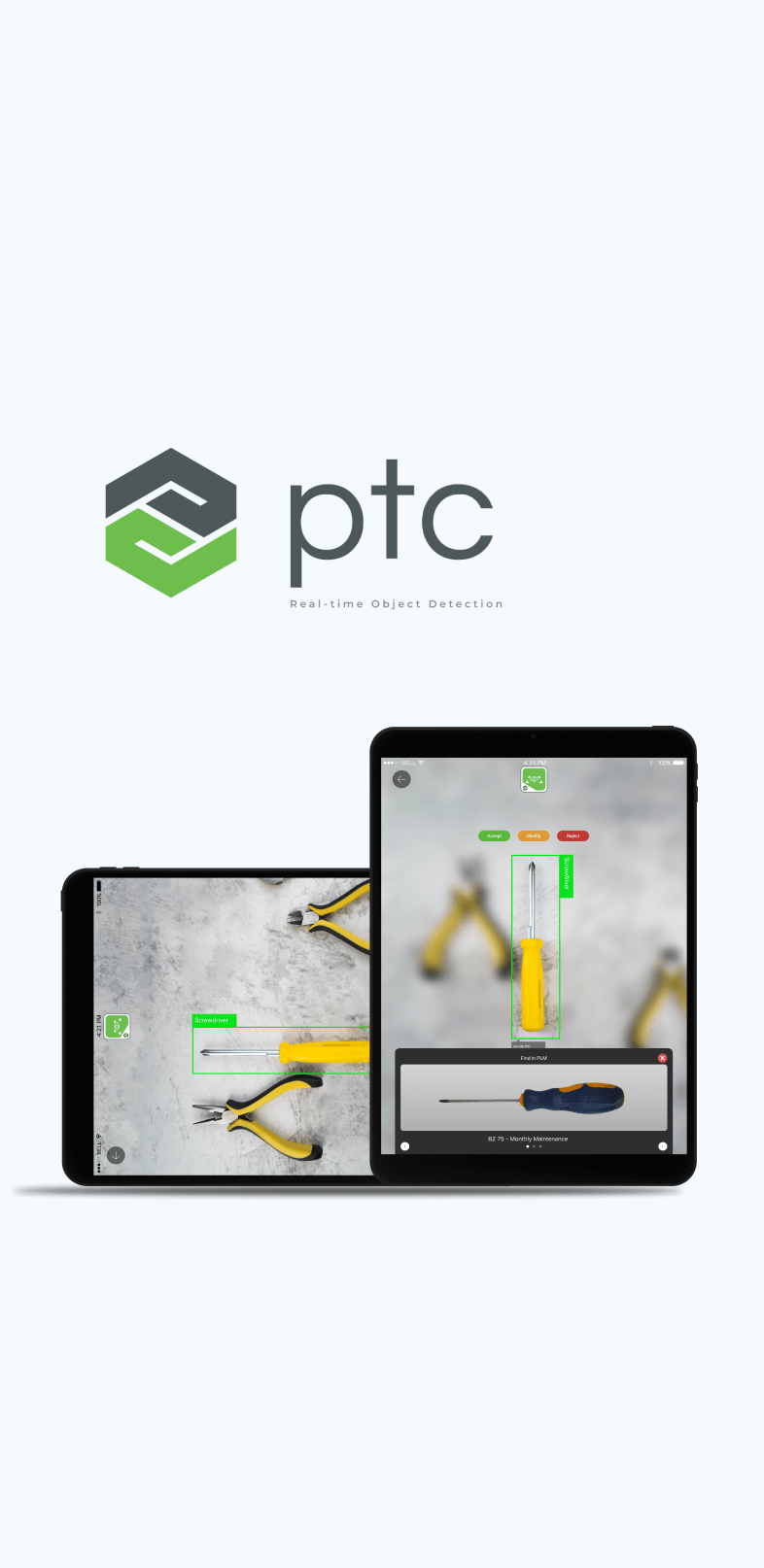

Real-time part identification in industrial settings: manufacturing technicians gain instant spare parts recognition, disrupting a $2 billion market.

Design research uncovered inefficiencies in part placement, spare detection, and logistics, guiding XR solutions that streamline industrial workflows across sectors.

Faster Part Recognition

Technicians identify spare parts four times faster compared to manual catalog searches, delivering instant AI-powered recognition.

Accuracy Rate

Achieved 90% recognition accuracy across diverse industrial environments.

Reduced onboarding time

AR-assisted workflows accelerate task learning and performance, aligning with broader manufacturing benchmarks.

Reflections & Impact

In our first year, we achieved four significant milestones that confirmed our approach and addressed the $2 billion service part catalog market’s needs. We disrupted traditional practices by developing a digital solution that eliminated physical catalogs and manual searches. Our innovative CAD model classification method enabled automated mapping in PLM systems, while RTOD web services enhanced part classification and recognition. Prominent businesses, including Porsche for mobile quality inspection, Liebherr for product inspection, PUIG for part recognition, and IKEA with the IKEA Place app, quickly adopted our technology. This resulted in improved workflows, allowing technicians to identify parts in 10 languages and reducing ordering mistakes. Our solution’s long-term potential lies in its four unique characteristics: efficiency through machine learning, versatility to adapt to customer feedback, persistence in reliable part recognition across varying conditions, and cross-platform integration with Unity Engine and Vuforia Studio, ensuring broad accessibility for users.

Next Steps

- Expand recognition capabilities by scaling the system beyond the current 20–200 range of parts into hundreds of categories.

- Advance multimodal interaction through voice and gesture controls, enabling more seamless hands-free use in industrial settings.

- Design for field resilience by introducing offline functionality to support technicians in remote or low-connectivity environments.